A new theory suggests that dreams' illogical logic has an important purpose.

For a while now, the leading theory about what we're doing when we dream is that we're sorting through our experiences of the last day or so, consolidating some stuff into memories for long-term storage, and discarding the rest. That doesn't explain, though, why our dreams are so often so exquisitely weird.

A new theory proposes our brains toss in all that crazy as a way of helping us process our daily experiences, much in the way that programmers add unrelated, random-ish nonsense, or "noise," into machine learning data sets to help computers discern useful, predictive patterns in the data they're fed.

Overfitting

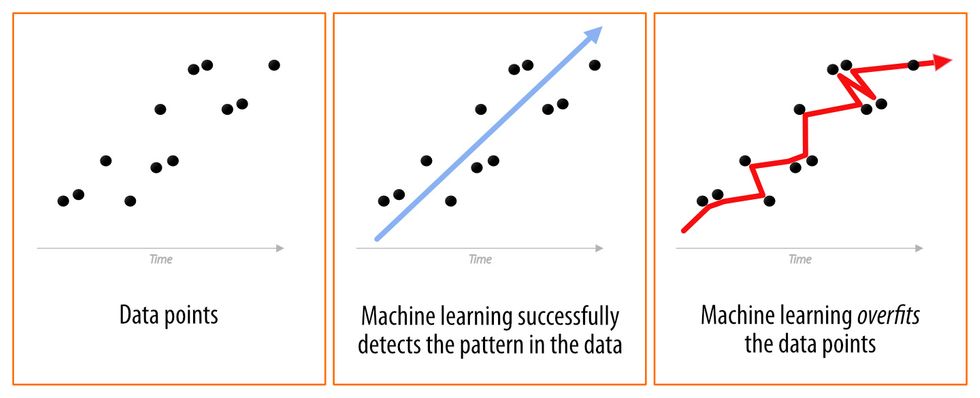

The goal of machine learning is to supply an algorithm with a data set, a "training set," in which patterns can be recognized and from which predictions that apply to other unseen data sets can be derived.

If a machine learning algorithm learns its training set too well, it merely spits out predictions that precisely, and uselessly, match that data, rather than capturing the underlying patterns that would likely hold true for other, thus-far unseen data. In such a case, the algorithm describes what the data set is rather than what it means. This is called "overfitting."
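To make the idea concrete, here is a minimal Python sketch of overfitting. Everything in it is an illustrative assumption rather than anything from Hoel's work: the made-up data process (`y = 2x + 1` plus random noise), the helper names, and the choice of a nearest-neighbor "memorizer" as the overfit model. The memorizer replays its training data perfectly, yet does worse than a plain straight-line fit on fresh data.

```python
import random

random.seed(0)  # fixed seed so the run is repeatable

def make_data(n):
    """Draw n points from y = 2x + 1 plus Gaussian noise (a made-up process)."""
    xs = [random.uniform(0, 10) for _ in range(n)]
    ys = [2 * x + 1 + random.gauss(0, 2) for x in xs]
    return xs, ys

def fit_line(xs, ys):
    """Ordinary least-squares line: captures the trend, not each point."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    slope = (sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
             / sum((x - mean_x) ** 2 for x in xs))
    intercept = mean_y - slope * mean_x
    return lambda x: slope * x + intercept

def memorize(xs, ys):
    """Nearest-neighbor lookup: replays the answer of the closest training point."""
    pairs = list(zip(xs, ys))
    return lambda x: min(pairs, key=lambda p: abs(p[0] - x))[1]

def mse(model, xs, ys):
    """Mean squared error of a model on a data set."""
    return sum((model(x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

train_x, train_y = make_data(200)
test_x, test_y = make_data(500)  # unseen data from the same process

line = fit_line(train_x, train_y)
memo = memorize(train_x, train_y)

line_train, line_test = mse(line, train_x, train_y), mse(line, test_x, test_y)
memo_train, memo_test = mse(memo, train_x, train_y), mse(memo, test_x, test_y)

print(f"line:      train error {line_train:.2f}, test error {line_test:.2f}")
print(f"memorizer: train error {memo_train:.2f}, test error {memo_test:.2f}")
```

The memorizer's training error is zero because every training point is its own nearest neighbor. That is the "describes what the data set is" failure: the noise in each stored answer gets replayed as if it were signal.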

Credit: Big Think

The value of noise

To keep machine learning from becoming too fixated on the specific data points in the set being analyzed, programmers may introduce extra, unrelated data as noise or corrupted inputs that are less self-similar than the real data being analyzed.

This noise typically has nothing to do with the project at hand. It's there, metaphorically speaking, to "distract" and even confuse the algorithm, forcing it to step back a bit to a vantage point at which patterns in the data may be more readily perceived and not drawn from the specific details within the data set.
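One way to see noise injection at work is the toy Python sketch below. It is an assumption-laden illustration, not the procedure from Hoel's paper or from any particular library: the data process, the helper names, the `jitter` and `copies` settings, and the idea of averaging several memorizers are all invented for the example. Each memorizer is trained on a copy of the inputs blurred with random noise, so no single copy can cling to the exact training points.

```python
import random

random.seed(1)  # fixed seed so the run is repeatable

def make_data(n):
    """Draw n points from y = 2x + 1 plus Gaussian noise (a made-up process)."""
    xs = [random.uniform(0, 10) for _ in range(n)]
    ys = [2 * x + 1 + random.gauss(0, 2) for x in xs]
    return xs, ys

def memorize(xs, ys):
    """Nearest-neighbor lookup: replays the answer of the closest training point."""
    pairs = list(zip(xs, ys))
    return lambda x: min(pairs, key=lambda p: abs(p[0] - x))[1]

def noisy_ensemble(xs, ys, copies=30, jitter=0.5):
    """Train one memorizer per noise-corrupted copy of the inputs, then average."""
    models = [memorize([x + random.gauss(0, jitter) for x in xs], ys)
              for _ in range(copies)]
    return lambda x: sum(m(x) for m in models) / len(models)

def mse(model, xs, ys):
    """Mean squared error of a model on a data set."""
    return sum((model(x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

train_x, train_y = make_data(200)
test_x, test_y = make_data(300)  # unseen data from the same process

plain = memorize(train_x, train_y)        # overfits: replays single points
noisy = noisy_ensemble(train_x, train_y)  # trained on corrupted inputs

plain_test = mse(plain, test_x, test_y)
noisy_test = mse(noisy, test_x, test_y)

print(f"plain memorizer test error:    {plain_test:.2f}")
print(f"noise-injected ensemble error: {noisy_test:.2f}")
```

Because the corrupted copies disagree about which training point sits closest to a given input, their average blends many nearby answers instead of replaying one. In this sketch, that blending is what pulls the prediction back toward the underlying trend.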

Unfortunately, overfitting also occurs a lot in the real world as people race to draw conclusions from insufficient data points — xkcd has a fun example of how this can happen with election "facts."

(In machine learning, there's also "underfitting," where an algorithm is too simple to track enough aspects of the data set to glean its patterns.)
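Underfitting can be sketched the same way. In this hypothetical Python example (the data process and helper names are assumptions made for illustration), a model that can only guess a single constant value is too simple to follow a rising trend, so it does poorly on the training data and on unseen data alike.

```python
import random

random.seed(2)  # fixed seed so the run is repeatable

def make_data(n):
    """Draw n points from y = 2x + 1 plus Gaussian noise (a made-up process)."""
    xs = [random.uniform(0, 10) for _ in range(n)]
    ys = [2 * x + 1 + random.gauss(0, 2) for x in xs]
    return xs, ys

def fit_constant(ys):
    """Too-simple model: always predicts the average training answer."""
    mean_y = sum(ys) / len(ys)
    return lambda x: mean_y

def fit_line(xs, ys):
    """Ordinary least-squares line: simple, but able to follow the trend."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    slope = (sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
             / sum((x - mean_x) ** 2 for x in xs))
    intercept = mean_y - slope * mean_x
    return lambda x: slope * x + intercept

def mse(model, xs, ys):
    """Mean squared error of a model on a data set."""
    return sum((model(x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

train_x, train_y = make_data(200)
test_x, test_y = make_data(200)  # unseen data from the same process

const = fit_constant(train_y)
line = fit_line(train_x, train_y)

const_train, const_test = mse(const, train_x, train_y), mse(const, test_x, test_y)
line_train, line_test = mse(line, train_x, train_y), mse(line, test_x, test_y)

print(f"constant: train error {const_train:.2f}, test error {const_test:.2f}")
print(f"line:     train error {line_train:.2f}, test error {line_test:.2f}")
```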

Credit: agsandrew/Adobe Stock

Nightly noise

There remains a lot we don't know about how much storage space our noggins contain. However, it's obvious that if the brain remembered absolutely everything we experienced in every detail, that would be an awful lot to remember. So it seems the brain consolidates experiences as we dream. To do this, it must make sense of them. It must have a system for figuring out what's important enough to remember and what's unimportant enough to forget rather than just dumping the whole thing into our long-term memory.

Performing such a wholesale dump would be an awful lot like overfitting: simply documenting what we've experienced without sorting through it to ascertain its meaning.

This is where the new theory, the Overfitting Brain Hypothesis (OBH), proposed by Erik Hoel of Tufts University, comes in. Suggesting that the brain's sleeping analysis of experiences may be akin to machine learning, he proposes that the illogical narratives in dreams are the biological equivalent of the noise programmers inject into algorithms to keep them from overfitting their data. He says that this may supply just enough off-pattern nonsense to force our brains to see the forest and not the trees in our daily data, our experiences.

Our experiences, of course, are delivered to us as sensory input, so Hoel suggests that dreams are sensory-input noise: biologically realistic noise injection with a narrative twist.

"Specifically, there is good evidence that dreams are based on the stochastic percolation of signals through the hierarchical structure of the cortex, activating the default-mode network. Note that there is growing evidence that most of these signals originate in a top-down manner, meaning that the 'corrupted inputs' will bear statistical similarities to the models and representations of the brain. In other words, they are derived from a stochastic exploration of the hierarchical structure of the brain. This leads to the kind [of] structured hallucinations that are common during dreams."

Put plainly, our dreams are just realistic enough to engross us and carry us along, but just different enough from our experiences, our "training set," to effectively serve as noise.

It's an interesting theory.

Obviously, we don't know the extent to which our biological mental processes actually resemble comparatively simple, man-made machine learning. Still, the OBH is worth thinking about, and perhaps more so than whatever that was last night.